Reliability

When the unexpected happens, such as when the weather degrades badly or a sensor gets blocked, the on-board perception system should diagnose the situation and react accordingly, e.g., by calling on an alternative system or the human driver. With this in mind, we investigate ways to improve the robustness of neural networks to input variations, including adversarial attacks, and to automatically predict both their performance and the confidence of their predictions, as in ConfidNet (NeurIPS 2019).

Publications

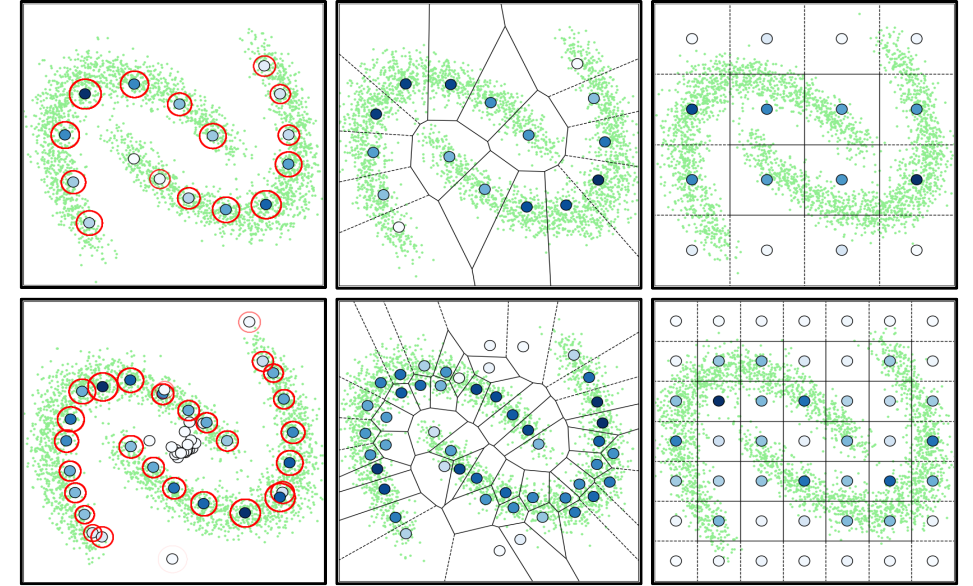

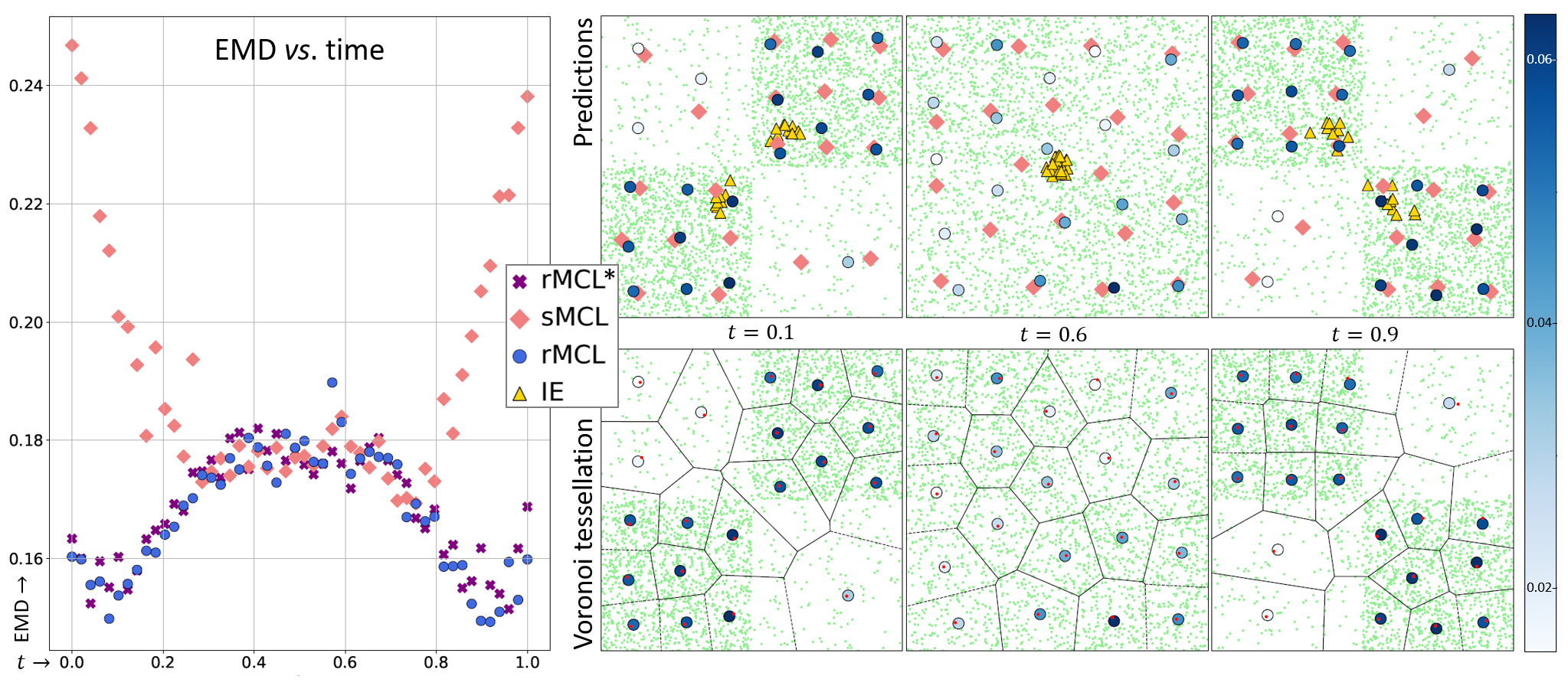

Winner-takes-all learners are geometry-aware conditional density estimators

Victor Letzelter, David Perera, Cédric Rommel, Mathieu Fontaine, Slim Essid, Gaël Richard, Patrick Pérez

International Conference on Machine Learning (ICML), 2024

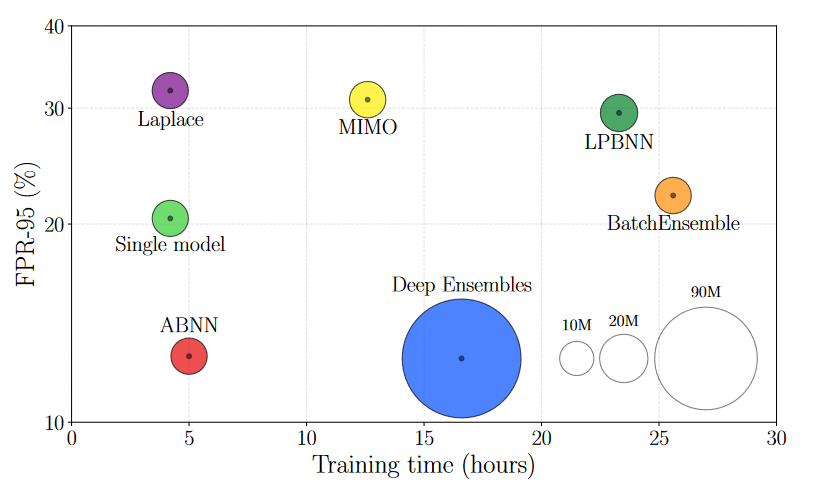

Make Me a BNN: A Simple Strategy for Estimating Bayesian Uncertainty from Pre-trained Models

Gianni Franchi, Olivier Laurent, Maxence Leguéry, Andrei Bursuc, Andrea Pilzer, Angela Yao

Conference on Computer Vision and Pattern Recognition (CVPR), 2024

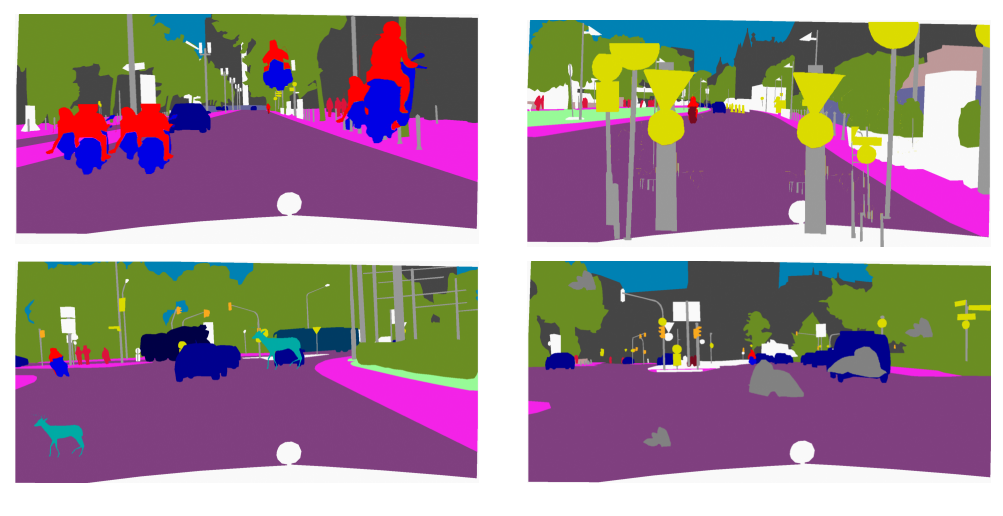

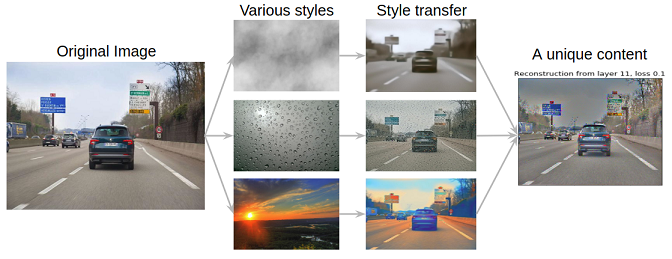

Learning to Generate Training Datasets for Robust Semantic Segmentation

Marwane Hariat, Olivier Laurent, Rémi Kazmierczak, Shihao Zhang, Andrei Bursuc, Angela Yao, Gianni Franchi

Winter Conference on Applications of Computer Vision (WACV), 2024

Resilient Multiple Choice Learning: A learned scoring scheme with application to audio scene analysis

Victor Letzelter, Mathieu Fontaine, Mickaël Chen, Patrick Pérez, Slim Essid, and Gaël Richard

Advances in Neural Information Processing Systems (NeurIPS), 2023

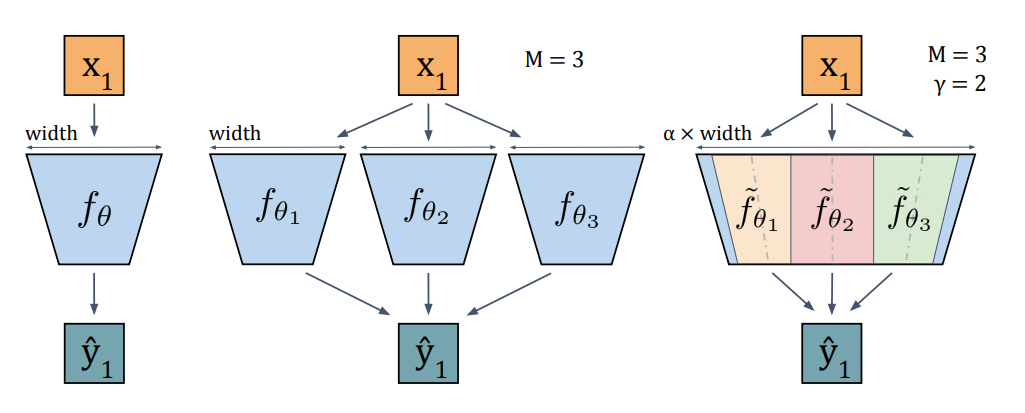

Packed Ensembles for efficient uncertainty estimation

Olivier Laurent, Adrien Lafage, Enzo Tartaglione, Geoffrey Daniel, Jean-Marc Martinez, Andrei Bursuc, and Gianni Franchi

International Conference on Learning Representations (ICLR), 2023

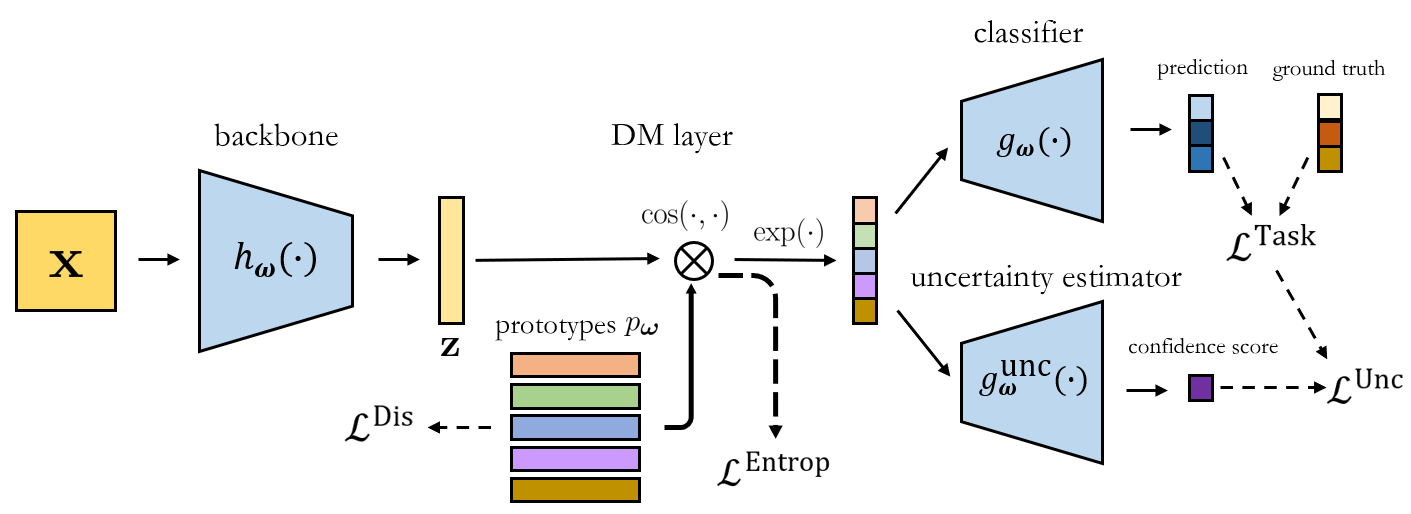

Latent Discriminant Deterministic Uncertainty

Gianni Franchi, Andrei Bursuc, Emanuel Aldea, Severine Dubuisson, and David Filliat

European Conference on Computer Vision (ECCV), 2022

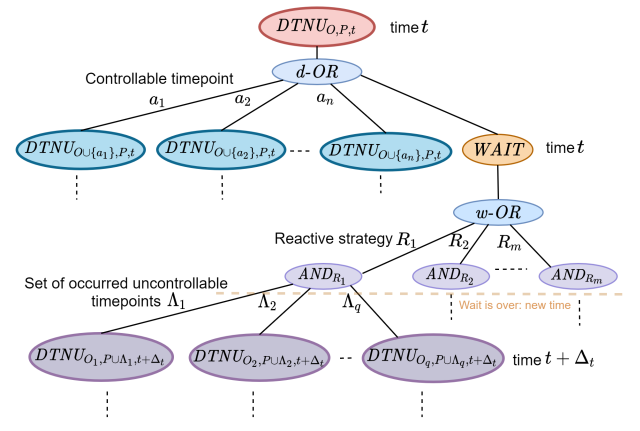

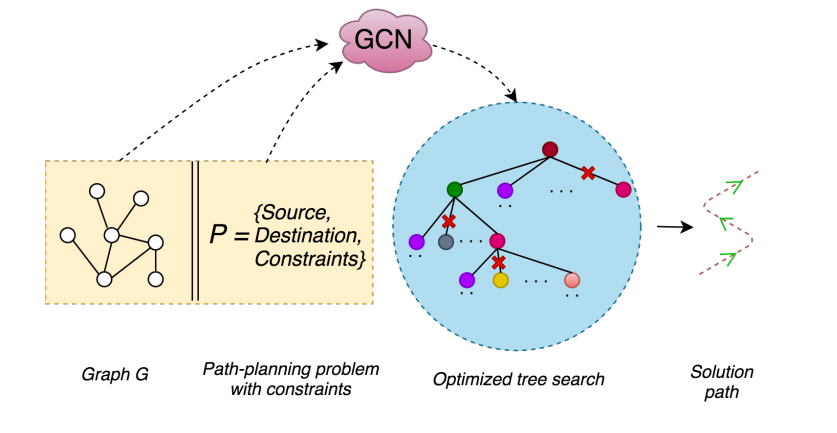

Solving Disjunctive Temporal Networks with Uncertainty under Restricted Time-Based Controllability using Tree Search and Graph Neural Networks

Kevin Osanlou, Jeremy Frank, J. Benton, Andrei Bursuc, Christophe Guettier, Tristan Cazenave and Eric Jacopin

AAAI Conference on Artificial Intelligence (AAAI), 2022

Robust Semantic Segmentation with Superpixel-Mix

Gianni Franchi, Nacim Belkhir, Mai Lan Ha, Yufei Hu, Andrei Bursuc, Volker Blanz, Angela Yao

British Machine Vision Conference (BMVC), 2021

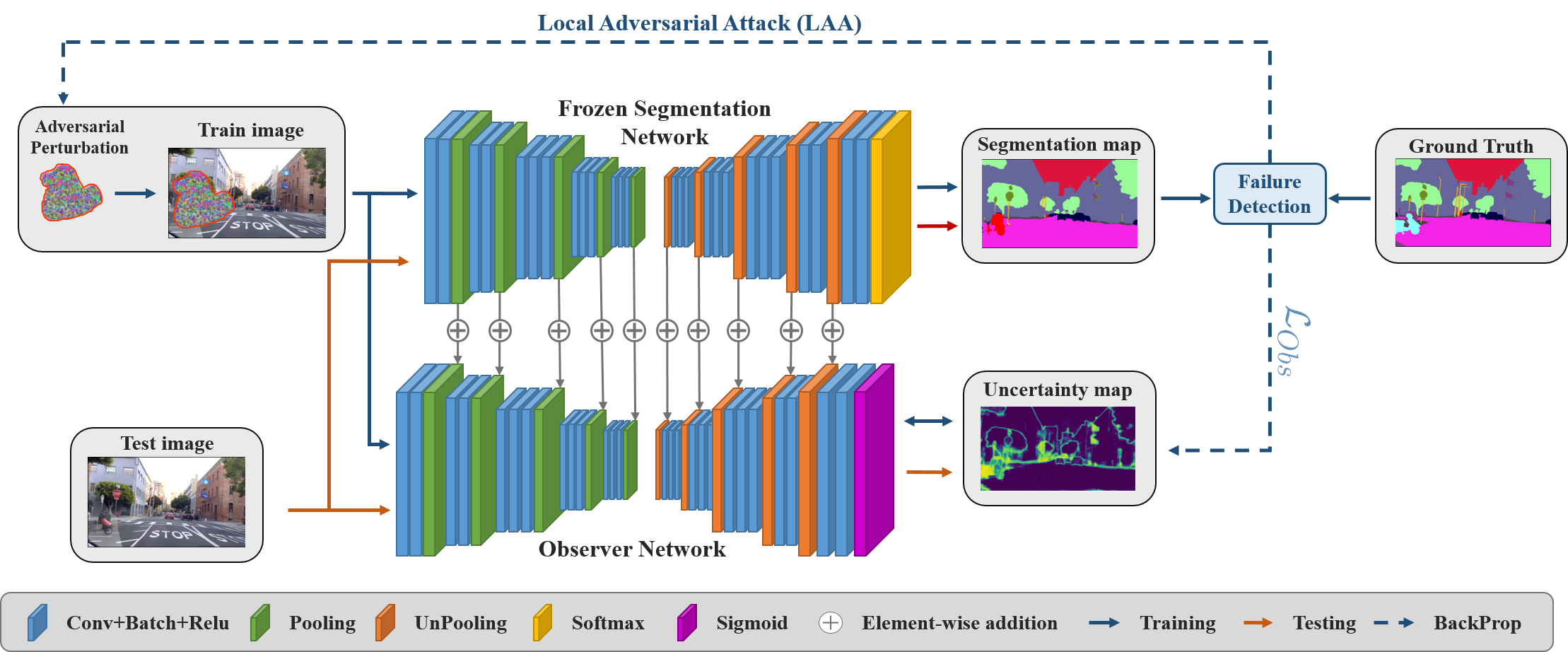

Triggering Failures: Out-Of-Distribution detection by learning from local adversarial attacks in Semantic Segmentation

Victor Besnier, Andrei Bursuc, Alexandre Briot, and David Picard

International Conference on Computer Vision (ICCV), 2021

StyleLess layer: Improving robustness for real-world driving

Julien Rebut, Andrei Bursuc, and Patrick Pérez

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2021

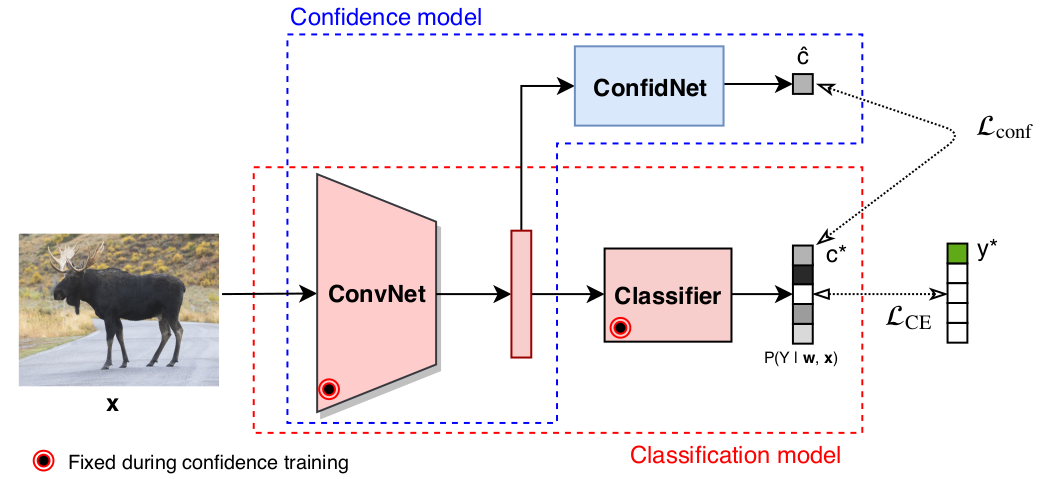

Confidence Estimation via Auxiliary Models

Charles Corbière, Nicolas Thome, Antoine Saporta, Tuan-Hung Vu, Matthieu Cord, and Patrick Pérez

IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2021

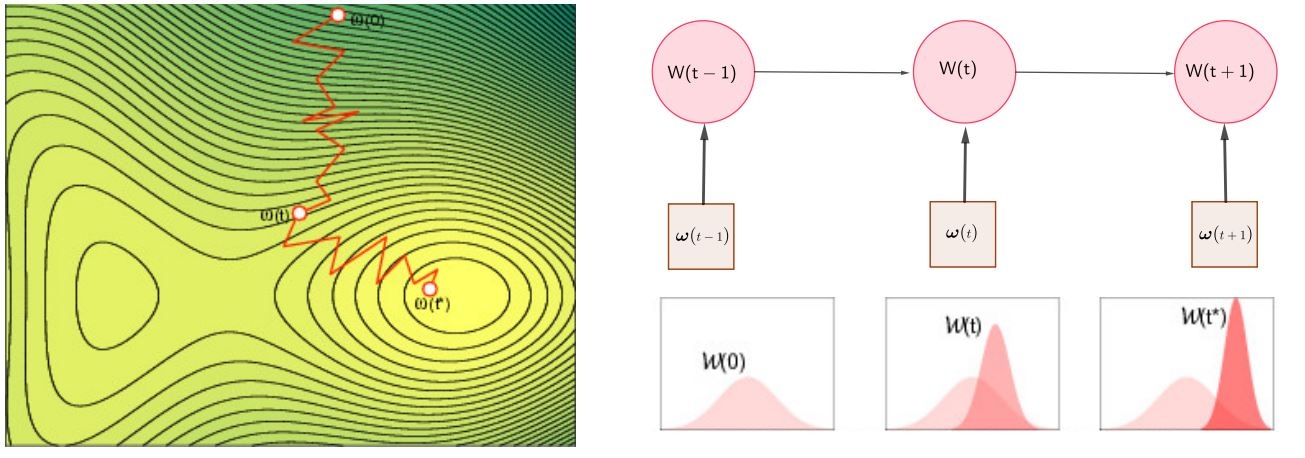

TRADI: Tracking deep neural network weight distributions for uncertainty estimation

Gianni Franchi, Andrei Bursuc, Emanuel Aldea, Severine Dubuisson, and Isabelle Bloch

European Conference on Computer Vision (ECCV), 2020

Addressing Failure Prediction by Learning Model Confidence

Charles Corbière, Nicolas Thome, Avner Bar-Hen, Matthieu Cord, and Patrick Pérez

Advances in Neural Information Processing Systems (NeurIPS), 2019

Optimal Solving of Constrained Path-Planning Problems with Graph Convolutional Networks and Optimized Tree Search

Kevin Osanlou, Andrei Bursuc, Christophe Guettier, Tristan Cazenave and Eric Jacopin

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2019