LiDARTouch: Monocular metric depth estimation with a few-beam LiDAR

Florent Bartoccioni Éloi Zablocki Patrick Pérez Matthieu Cord Karteek Alahari

CVIU 2022

Abstract

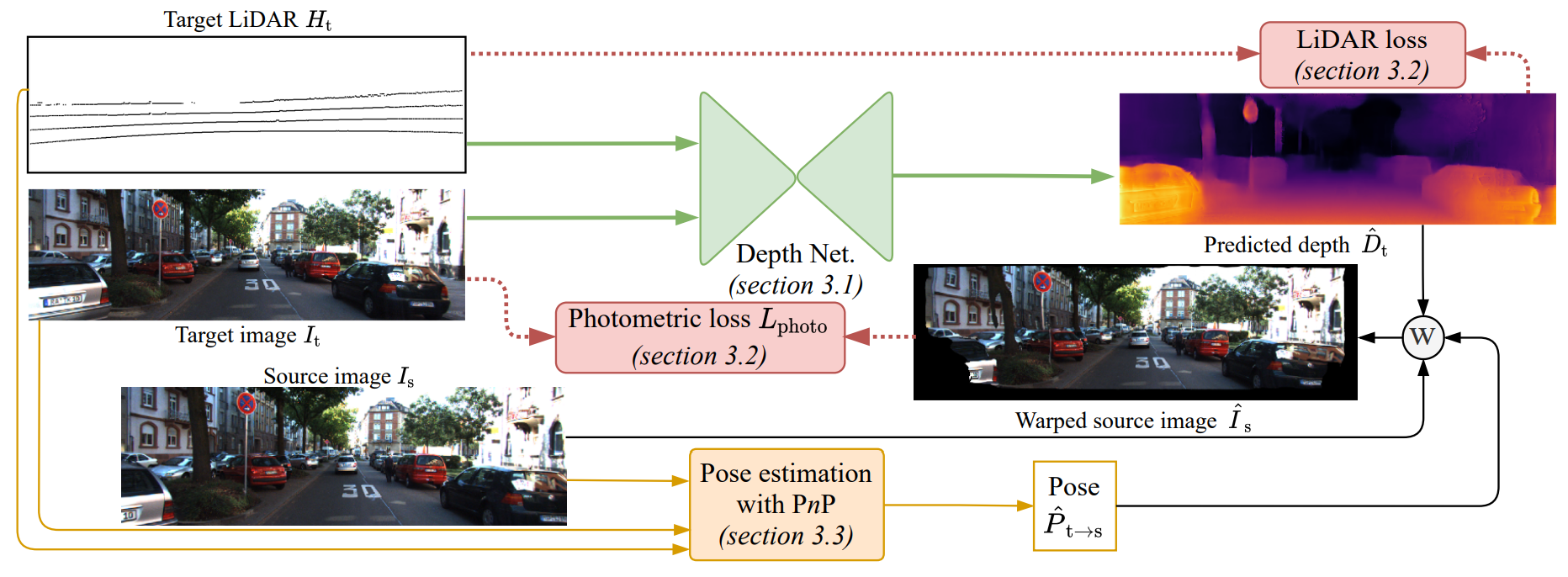

Vision-based depth estimation, a key feature in autonomous systems, often relies on a single camera or several independent ones. In such a monocular setup, dense depth is obtained either with additional input from one or several expensive LiDARs, e.g., with 64 beams, or with camera-only methods, which suffer from scale-ambiguity and infinite-depth problems. In this paper, we propose a new alternative for densely estimating metric depth by combining a monocular camera with a lightweight LiDAR, e.g., with 4 beams, typical of today's automotive-grade mass-produced laser scanners. Inspired by recent self-supervised methods, we introduce a novel framework, called LiDARTouch, to estimate dense depth maps from monocular images with the help of touches of LiDAR, i.e., without the need for dense ground-truth depth. In our setup, the minimal LiDAR input contributes on three different levels: as an additional input to the model, in a self-supervised LiDAR reconstruction objective, and in estimating changes of pose (a key component of self-supervised depth estimation architectures). Our LiDARTouch framework achieves a new state of the art in self-supervised depth estimation on the KITTI dataset, supporting our choice to integrate the very sparse LiDAR signal with other visual features. Moreover, we show that the use of a few-beam LiDAR alleviates the scale-ambiguity and infinite-depth issues that camera-only methods suffer from. We also demonstrate that methods from the fully supervised depth-completion literature can be adapted to a self-supervised regime with a minimal LiDAR signal.
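To make two of the three uses of the LiDAR signal more concrete (as a model input and in a reconstruction objective), here is a minimal sketch. It is not the paper's implementation. It assumes that the LiDAR points are already expressed in the camera frame (extrinsic calibration applied), that the camera follows a pinhole model with intrinsics `fx`, `fy`, `cx`, `cy`, and that the reconstruction objective is a plain masked L1 penalty. The function names `project_to_sparse_depth` and `lidar_reconstruction_loss` are hypothetical, and the paper's actual input encoding and loss may differ.

```python
from typing import List, Optional, Sequence, Tuple

# (x, y, z) in camera coordinates, z pointing forward, in meters (assumed convention)
Point3D = Tuple[float, float, float]


def project_to_sparse_depth(
    points: Sequence[Point3D],
    fx: float, fy: float, cx: float, cy: float,
    height: int, width: int,
) -> List[List[Optional[float]]]:
    """Hypothetical helper: project camera-frame LiDAR points into a sparse depth map.

    Uses a pinhole model. Pixels without a LiDAR return stay None; when several
    points fall on the same pixel, the nearest one is kept (it occludes the others).
    """
    depth: List[List[Optional[float]]] = [[None] * width for _ in range(height)]
    for x, y, z in points:
        if z <= 0:  # point is behind the camera, cannot be projected
            continue
        u = int(round(fx * x / z + cx))  # column index
        v = int(round(fy * y / z + cy))  # row index
        if 0 <= u < width and 0 <= v < height:
            current = depth[v][u]
            if current is None or z < current:
                depth[v][u] = z
    return depth


def lidar_reconstruction_loss(
    pred: List[List[float]],
    sparse: List[List[Optional[float]]],
) -> float:
    """Hypothetical masked L1 loss: mean |pred - lidar| over pixels with a LiDAR return."""
    total, count = 0.0, 0
    for pred_row, lidar_row in zip(pred, sparse):
        for d_pred, d_lidar in zip(pred_row, lidar_row):
            if d_lidar is not None:  # mask out pixels the LiDAR did not hit
                total += abs(d_pred - d_lidar)
                count += 1
    return total / count if count else 0.0
```

The mask matters because a few-beam LiDAR covers only a small fraction of the image pixels: the loss is averaged over pixels that actually received a return, so empty pixels neither pull the prediction toward zero nor dilute the signal. Because the supervision is in meters, a penalty of this kind ties the predicted depth to a metric scale, which camera-only photometric objectives cannot do on their own.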

BibTeX

@article{bartoccioni2022lidartouch,
  author  = {Florent Bartoccioni and
             {\'{E}}loi Zablocki and
             Patrick P{\'{e}}rez and
             Matthieu Cord and
             Karteek Alahari},
  title   = {LiDARTouch: Monocular metric depth estimation with a few-beam LiDAR},
  journal = {Comput. Vis. Image Underst.},
  year    = {2022}
}