Train Till You Drop: Towards Stable and Robust Source-free Unsupervised 3D Domain Adaptation

Bjoern Michele, Alexandre Boulch, Tuan-Hung Vu, Gilles Puy, Renaud Marlet, Nicolas Courty

ECCV 2024

Abstract

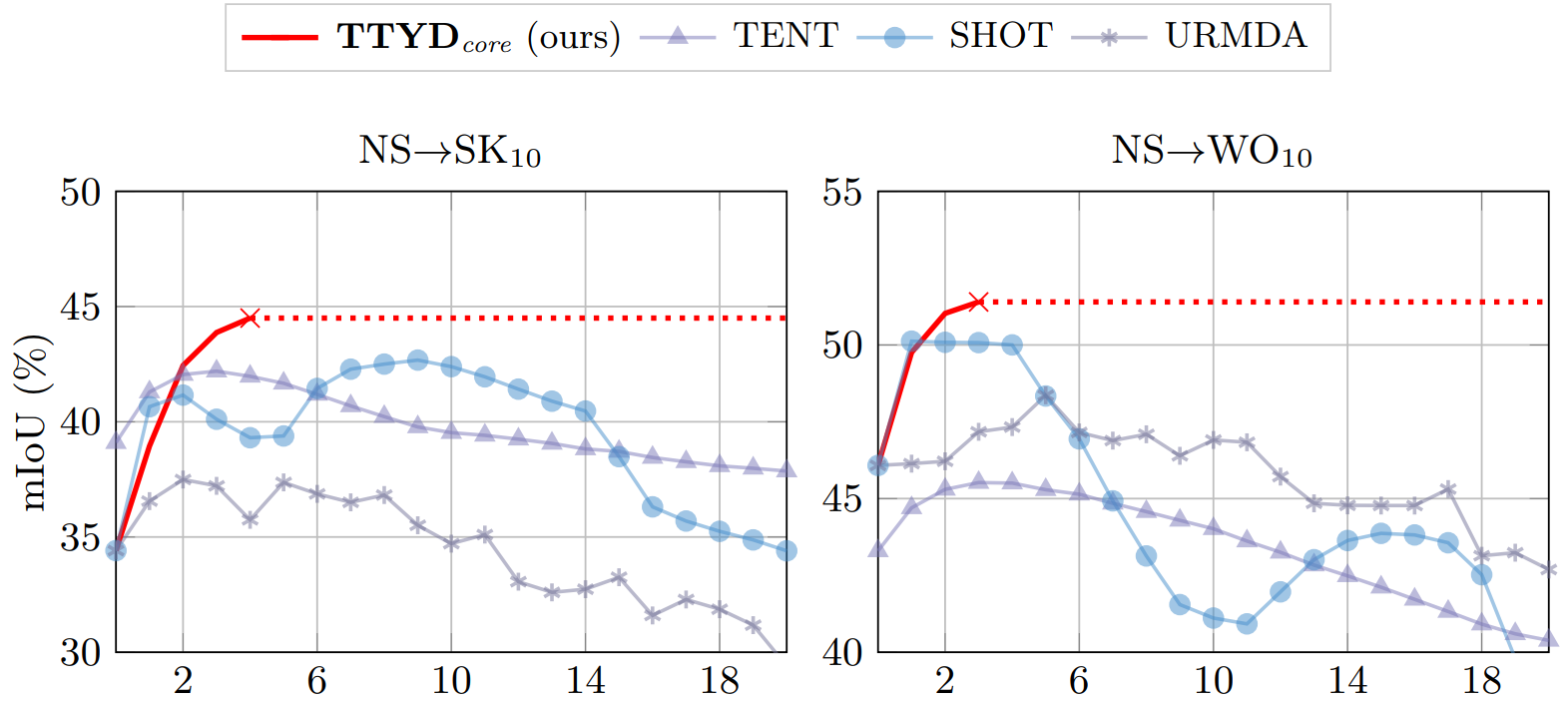

We tackle the challenging problem of source-free unsupervised domain adaptation (SFUDA) for 3D semantic segmentation. It amounts to performing domain adaptation on an unlabeled target domain without any access to source data; the only available information is a model trained to achieve good performance on the source domain. A common issue with existing SFUDA approaches is that performance degrades after some training time, which is a by-product of an under-constrained and ill-posed problem. We discuss two strategies to alleviate this issue. First, we propose a sensible way to regularize the learning problem. Second, we introduce a novel criterion based on agreement with a reference model. It is used (1) to stop the training when appropriate and (2) as a validator to select hyperparameters without any knowledge of the target domain. Our contributions are easy to implement and readily applicable to all SFUDA methods, ensuring stable improvements over all baselines. We validate our findings on various 3D lidar settings, achieving state-of-the-art performance.
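To make the idea of an agreement-based stopping criterion more concrete, here is a minimal sketch. It is not the paper's implementation: the abstract does not say how agreement is measured or how the stopping point is chosen. The sketch assumes agreement is the fraction of points on which the adapted model and the reference model predict the same class, and it swaps in a generic patience rule to pick the checkpoint. The names `agreement`, `select_stopping_step`, and `patience` are hypothetical.

```python
from typing import Sequence


def agreement(pred: Sequence[int], ref: Sequence[int]) -> float:
    """Fraction of points where two per-point label predictions coincide.

    Assumption: plain label agreement; the paper's criterion may differ.
    """
    if len(pred) != len(ref):
        raise ValueError("predictions must cover the same points")
    if len(pred) == 0:
        return 0.0
    return sum(p == r for p, r in zip(pred, ref)) / len(pred)


def select_stopping_step(agreements: Sequence[float], patience: int = 2) -> int:
    """Return the index of the checkpoint to keep.

    Generic patience rule (an assumption, not the paper's rule): scan the
    agreement scores in training order and stop once the score has not
    improved for `patience` consecutive checks; keep the best one seen.
    """
    best_idx, best, stale = 0, float("-inf"), 0
    for i, score in enumerate(agreements):
        if score > best:
            best_idx, best, stale = i, score, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_idx


# Toy usage: labels predicted on the same four target points.
current = [0, 1, 2, 2]
reference = [0, 1, 1, 2]
score = agreement(current, reference)

# Agreement measured at successive checkpoints during adaptation.
history = [0.60, 0.70, 0.75, 0.74, 0.73, 0.90]
keep = select_stopping_step(history, patience=2)
```

No target labels are needed at any point in this sketch, which is what would allow the same score to serve as a validator when comparing hyperparameter settings.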

BibTeX

@inproceedings{michele2024ttyd,

title={Train Till You Drop: Towards Stable and Robust Source-free Unsupervised 3D Domain Adaptation},

author={Michele, Bjoern and Boulch, Alexandre and Vu, Tuan-Hung and Puy, Gilles and Marlet, Renaud and Courty, Nicolas},

year={2024},

booktitle={European Conference on Computer Vision}

}