NeeDrop: Self-supervised Shape Representation from Sparse Point Clouds using Needle Dropping

Alexandre Boulch Pierre-Alain Langlois Gilles Puy Renaud Marlet

3DV 2021

Abstract

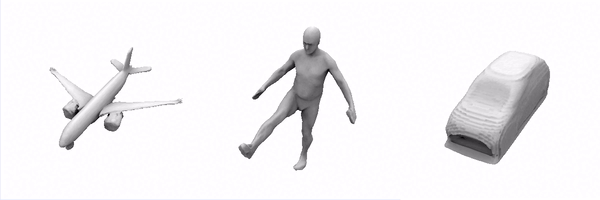

There has recently been growing interest in implicit shape representations. Contrary to explicit representations, they have no resolution limitations and easily handle a wide variety of surface topologies. To learn these implicit representations, current approaches rely on a certain level of shape supervision (e.g., inside/outside information or distance-to-shape knowledge), or at least require a dense point cloud (to approximate the distance to the shape well enough). In contrast, we introduce NeeDrop, a self-supervised method for learning shape representations from possibly extremely sparse point clouds. As in Buffon’s needle problem, we “drop” (sample) needles on the point cloud and consider that, statistically, close to the surface, the needle end points lie on opposite sides of the surface. No shape knowledge is required and the point cloud can be highly sparse, e.g., lidar point clouds acquired by vehicles. Previous self-supervised shape representation approaches fail to produce good-quality results on this kind of data. We obtain quantitative results on par with existing supervised approaches on shape reconstruction datasets and show promising qualitative results on hard autonomous driving datasets such as KITTI.
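The needle-dropping idea can be illustrated with a minimal sketch. This is not the authors' implementation: the function names (`drop_needle`, `opposite_side_loss`, `toy_occupancy`), the Gaussian sampling of needle offsets, the `sigma` parameter, and the exact form of the loss are all assumptions chosen for illustration. The sketch centers a short random needle on an input point and scores a pair of predicted occupancy probabilities by how likely the two end points are to lie on opposite sides of the surface.

```python
import math
import random


def drop_needle(point, sigma=0.05, rng=random):
    """Return the two end points of a random needle centered on `point`.

    Assumption: the half-needle offset is drawn from an isotropic Gaussian
    with standard deviation `sigma`, so direction and length are both random.
    """
    half = [rng.gauss(0.0, sigma) for _ in point]
    end_a = [p + h for p, h in zip(point, half)]
    end_b = [p - h for p, h in zip(point, half)]
    return end_a, end_b


def opposite_side_loss(occ_a, occ_b, eps=1e-7):
    """Negative log-probability that two end points lie on opposite sides.

    `occ_a` and `occ_b` are predicted probabilities of being inside the shape.
    Assumption: the two predictions are treated as independent.
    """
    occ_a = min(max(occ_a, eps), 1.0 - eps)
    occ_b = min(max(occ_b, eps), 1.0 - eps)
    p_opposite = occ_a * (1.0 - occ_b) + (1.0 - occ_a) * occ_b
    return -math.log(p_opposite)


def toy_occupancy(point, sharpness=50.0):
    """Hypothetical stand-in for a learned network: z < 0 counts as inside."""
    return 1.0 / (1.0 + math.exp(sharpness * point[2]))


if __name__ == "__main__":
    rng = random.Random(0)
    surface_point = [0.2, -0.1, 0.0]  # a point on the plane z = 0
    end_a, end_b = drop_needle(surface_point, sigma=0.05, rng=rng)
    loss = opposite_side_loss(toy_occupancy(end_a), toy_occupancy(end_b))
    print(end_a, end_b, loss)
```

In this toy setting the needle is centered on a point of the plane, so its end points fall on opposite sides and the loss is lower than it would be for two end points predicted to be on the same side. A real model would replace `toy_occupancy` with a trainable network and average the loss over many needles.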

BibTeX

@inproceedings{boulch2021needrop,
  title={NeeDrop: Self-supervised Shape Representation from Sparse Point Clouds using Needle Dropping},
  author={Boulch, Alexandre and Langlois, Pierre-Alain and Puy, Gilles and Marlet, Renaud},
  booktitle={International Conference on 3D Vision (3DV)},
  year={2021}
}