Annealed Multiple Choice Learning: Overcoming limitations of Winner-takes-all with annealing

David Perera, Victor Letzelter, Théo Mariotte, Adrien Cortés, Mickael Chen, Slim Essid, Gaël Richard

NeurIPS 2024

Abstract

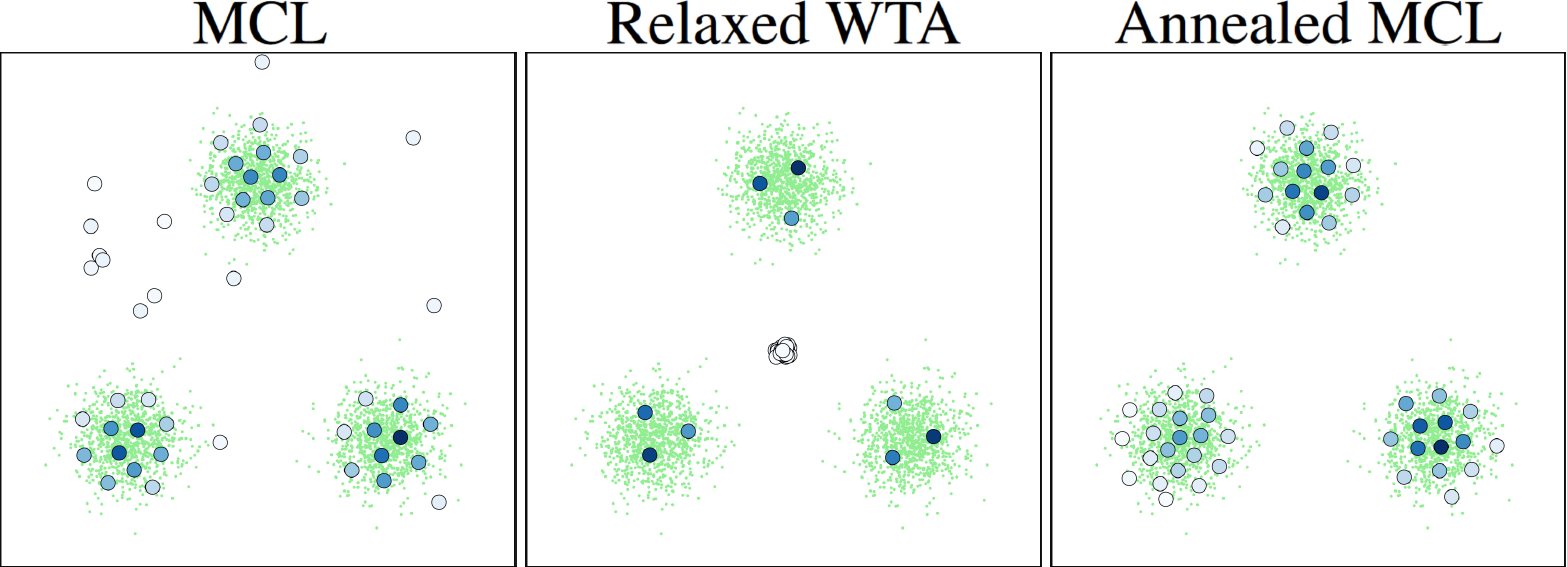

We introduce Annealed Multiple Choice Learning (aMCL), which combines simulated annealing with MCL. MCL is a learning framework that handles ambiguous tasks by predicting a small set of plausible hypotheses. These hypotheses are trained using the Winner-takes-all (WTA) scheme, which promotes the diversity of the predictions. However, because WTA is greedy, this scheme may converge toward an arbitrarily suboptimal local minimum. We overcome this limitation using annealing, which enhances the exploration of the hypothesis space during training. We leverage insights from statistical physics and information theory to provide a detailed description of the model training trajectory. Additionally, we validate our algorithm through extensive experiments on synthetic datasets, the standard UCI benchmark, and speech separation.
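To make the contrast between greedy WTA and its annealed relaxation concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the authors' implementation: it assumes a squared-error loss, a Boltzmann (softmin) assignment of each target to the hypotheses at temperature `temperature`, and an exponential cooling schedule. The function names, the toy data, and the schedule are all hypothetical; the paper's exact formulation may differ.

```python
import math


def squared_errors(hypotheses, target):
    """Squared Euclidean error of every hypothesis against one target."""
    return [sum((h_i - t_i) ** 2 for h_i, t_i in zip(h, target))
            for h in hypotheses]


def wta_loss(hypotheses, target):
    """Winner-takes-all: only the best (closest) hypothesis is penalized."""
    return min(squared_errors(hypotheses, target))


def boltzmann_weights(errors, temperature):
    """Soft assignment of the target to hypotheses (assumed softmin form)."""
    best = min(errors)  # shift by the minimum for numerical stability
    unnorm = [math.exp(-(e - best) / temperature) for e in errors]
    total = sum(unnorm)
    return [u / total for u in unnorm]


def annealed_loss(hypotheses, target, temperature):
    """Error averaged under the soft assignment; tends to WTA as T -> 0."""
    errors = squared_errors(hypotheses, target)
    weights = boltzmann_weights(errors, temperature)
    return sum(w * e for w, e in zip(weights, errors))


# Toy data (hypothetical): three 2-D hypotheses and one target.
hypotheses = [[0.0, 0.0], [1.0, 1.0], [3.0, 3.0]]
target = [0.9, 1.2]

# Hypothetical exponential cooling schedule: T_t = T_0 * rho^t.
initial_temperature, decay = 10.0, 0.5
for step in range(0, 20, 5):
    temperature = initial_temperature * decay ** step
    loss = annealed_loss(hypotheses, target, temperature)
    print(f"step={step:2d}  T={temperature:.5f}  annealed loss={loss:.4f}")

print("WTA loss:", wta_loss(hypotheses, target))
```

At high temperature every hypothesis receives a comparable share of the target, so all of them are updated and the hypothesis space is explored; as the temperature decreases, the weights concentrate on the closest hypothesis and the objective approaches the WTA loss.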

BibTeX

@inproceedings{amcl,
  title={Annealed Multiple Choice Learning: Overcoming limitations of Winner-takes-all with annealing},
  author={Perera, David and Letzelter, Victor and Mariotte, Th{\'e}o and Cort{\'e}s, Adrien and Chen, Mickael and Essid, Slim and Richard, Ga{\"e}l},
  booktitle={Advances in Neural Information Processing Systems},
  year={2024}
}