Research

Our work spans four intertwined axes. Click any axis to browse matching publications.

3D Perception and Scene Understanding →

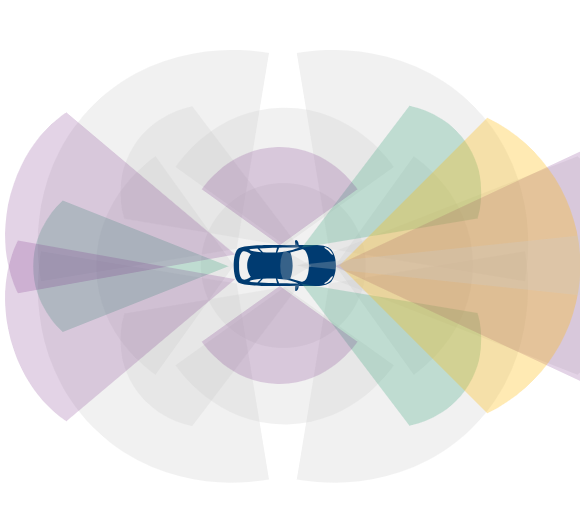

Autonomous vehicles rely on cameras, LiDARs, radars, and ultrasonics to perceive their surroundings. We study how to fuse and interpret these multi-modal signals to build accurate 3D representations of the driving scene — object detection, semantic segmentation, depth estimation, motion forecasting, and pose estimation.

Recent key works

- R3DPA: Leveraging 3D Representation Alignment and RGB Pretrained Priors for LiDAR Scene Generation

- OccAny: Generalized Unconstrained Urban 3D Occupancy

- LOSC: LiDAR Open-voc Segmentation Consolidator

- LiDAS: Lighting-driven Dynamic Active Sensing for Nighttime Perception

Foundation Models and World Modeling →

Large-scale pretrained models can go beyond fixed ontologies and adapt to a wide variety of downstream tasks. We investigate vision and vision-language foundation models, as well as world models that learn to simulate and predict how driving scenes evolve over time — enabling better generalization with less task-specific supervision.

Recent key works

- StableMTL: Repurposing Latent Diffusion Models for Multi-Task Learning from Partially Annotated Synthetic Datasets

- OccAny: Generalized Unconstrained Urban 3D Occupancy

- NAF: Zero-Shot Feature Upsampling via Neighborhood Attention Filtering

- MAD: Motion Appearance Decoupling for efficient Driving World Models

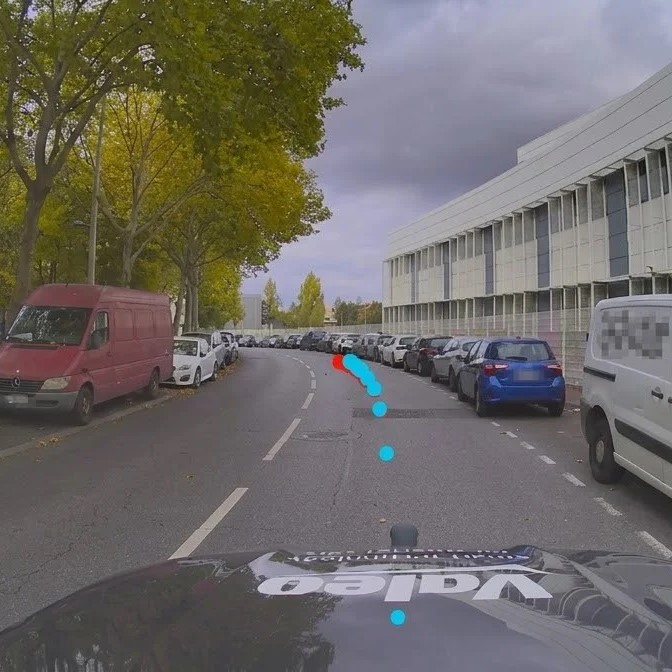

Physical AI and End-to-End Planning →

Rather than treating perception and decision-making as separate modules, end-to-end approaches learn to map sensor inputs directly to driving actions. We explore neural planning architectures and physical AI methods that reason jointly about scene understanding and trajectory planning — aiming for driving systems that are both simpler and more effective.

Robust, Reliable and Explainable Models →

Safety-critical applications demand models that are resilient to distribution shifts, adverse conditions, and unexpected inputs. We work on uncertainty estimation, domain generalization, robustness to corruptions and adversarial perturbations, and explainability methods that help understand and trust the decisions made by deep learning systems.

Recent key works

- Multiple Choice Learning of Low-Rank Adapters for Language Modeling

- Attention, May I Have Your Decision? Localizing Generative Choices in Diffusion Models

- DRIV-EX: Counterfactual Explanations for Driving LLMs

- GIFT: A Framework for Global Interpretable Faithful Textual Explanations of Vision Classifiers