LiDAS: Lighting-driven Dynamic Active Sensing for Nighttime Perception

Simon de Moreau Andrei Bursuc Hafid El-Idrissi Fabien Moutarde

CVPR 2026

Abstract

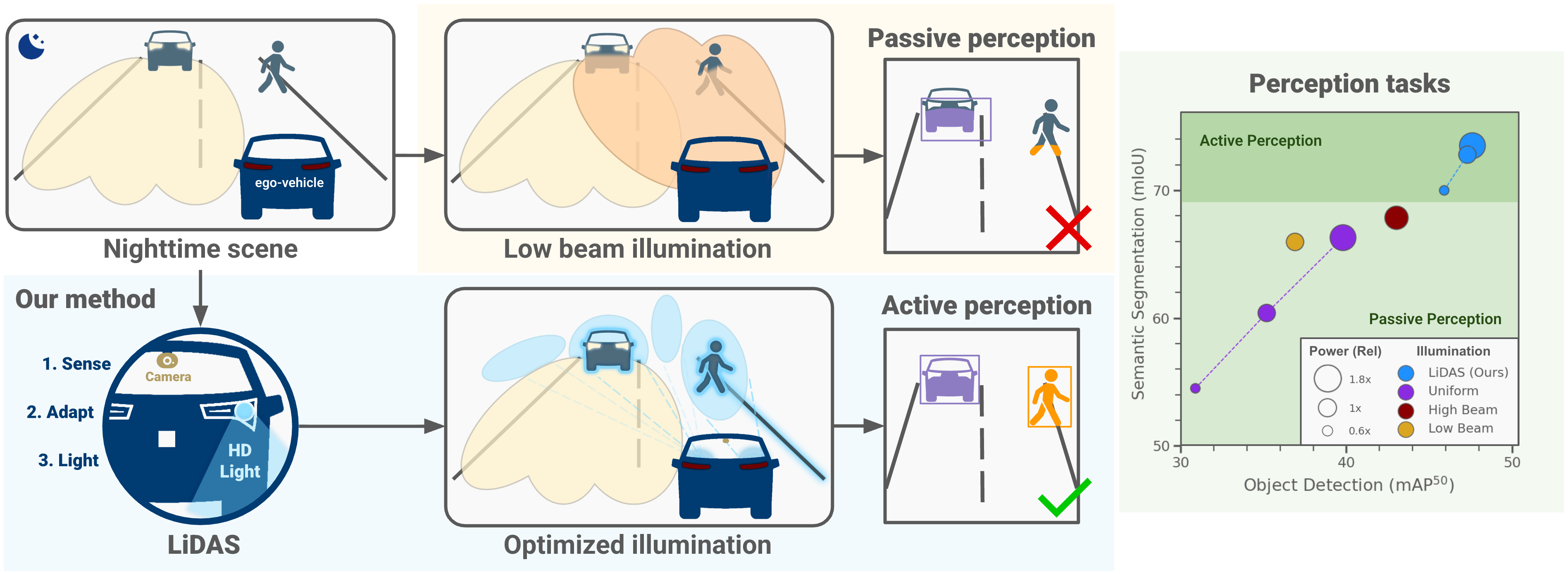

Camera-based perception in autonomous driving suffers a steep performance drop at night, when low light degrades image quality. Existing solutions rely on either costly hardware upgrades or post-hoc image enhancement. We introduce LiDAS, a closed-loop active illumination system that dynamically predicts the optimal lighting pattern for visual perception, concentrating light on objects of interest while reducing it in empty regions. LiDAS integrates seamlessly with standard perception models and high-definition headlights, enabling zero-shot nighttime generalization of daytime-trained networks. On a realistic nighttime simulator and on real driving sequences, LiDAS yields +18.7% mAP50 and +5.0% mIoU over a standard low beam at equal power, while also enabling up to 40% energy savings at matched performance. Our approach complements domain-generalization methods and turns commodity headlights into active perception devices, paving the way for robust nighttime autonomous perception.

BibTeX

@inproceedings{demoreau2026lidas,
  title={LiDAS: Lighting-driven Dynamic Active Sensing for Nighttime Perception},
  author={de Moreau, Simon and Bursuc, Andrei and El-Idrissi, Hafid and Moutarde, Fabien},
  booktitle={CVPR},
  year={2026}
}