Cross-task Attention Mechanism for Dense Multi-task Learning

Ivan Lopes Tuan-Hung Vu Raoul de Charette

WACV 2023

Abstract

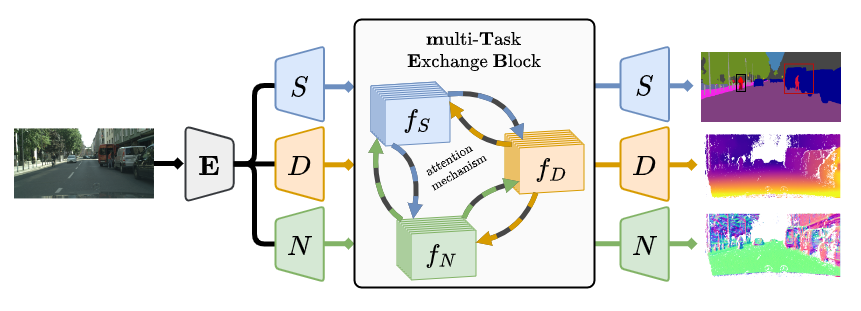

Multi-task learning has recently become a promising solution for comprehensive understanding of complex scenes. Besides being memory-efficient, appropriately designed multi-task models can favor the exchange of complementary signals across tasks. In this work, we jointly address 2D semantic segmentation and two geometry-related tasks, namely dense depth and surface normal estimation, as well as edge estimation, showing their benefit on indoor and outdoor datasets. We propose a novel multi-task learning architecture that exploits pair-wise cross-task exchange through correlation-guided attention and self-attention to enhance the average representation learning for all tasks. We conduct extensive experiments on three multi-task setups, showing the benefit of our proposal over competitive baselines on both synthetic and real benchmarks. We also extend our method to the novel multi-task unsupervised domain adaptation setting. Our code is available at https://github.com/cv-rits/DenseMTL.
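To give a rough intuition for what a pair-wise cross-task exchange can look like, the sketch below applies plain scaled dot-product attention between the features of two tasks: each spatial location of a target task (for example segmentation) gathers features from a source task (for example depth), weighted by feature correlation. This is only an illustrative assumption, not the module proposed in the paper. The function name `cross_task_attention`, the absence of learned projections, and the toy inputs are all hypothetical; the actual implementation is in the linked repository.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_task_attention(target_feats, source_feats):
    """Generic scaled dot-product attention from one task to another.

    target_feats: list of N feature vectors of the task being refined (queries).
    source_feats: list of M feature vectors of the other task (keys and values).
    Returns N vectors, each a correlation-weighted mix of the source features.
    Assumption: no learned projections, to keep the sketch minimal.
    """
    dim = len(target_feats[0])
    scale = 1.0 / math.sqrt(dim)
    attended = []
    for query in target_feats:
        # Correlation between this target location and every source location.
        scores = [scale * sum(q * k for q, k in zip(query, key))
                  for key in source_feats]
        weights = softmax(scores)
        # Weighted sum of the source features, channel by channel.
        attended.append([sum(w * value[c] for w, value in zip(weights, source_feats))
                         for c in range(dim)])
    return attended


# Toy example: two spatial locations, two channels per task.
seg_feats = [[1.0, 0.0], [0.0, 1.0]]
depth_feats = [[1.0, 0.0], [0.0, 1.0]]
depth_to_seg = cross_task_attention(seg_feats, depth_feats)
```

In a pair-wise setting, a function like this would be called once per ordered pair of tasks, and the results combined with each task's own features.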

BibTeX

@inproceedings{lopes2023densemtl,
  title={Cross-task Attention Mechanism for Dense Multi-task Learning},
  author={Lopes, Ivan and Vu, Tuan-Hung and de Charette, Raoul},
  booktitle={Proceedings of IEEE/CVF Winter Conference on Applications of Computer Vision},
  year={2023}
}